Yiding Hao, Dana Angluin and Bob Frank publish paper in the Transactions of the ACL

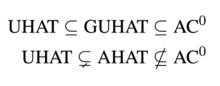

PhD student Yiding Hao, CS faculty member Dana Angluin and Linguistics faculty member Bob Frank have published an article, “Formal Language Recognition by Hard Attention Transformers: Perspectives from Circuit Complexity,” in the Transactions of the Association for Computational Linguistics. The paper sheds light on the expressive power of the Transformer, a recently developed but widely used class of neural network models of language. Using tools from computer science that measure the complexity of a problem by the type of abstract circuit needed to solve it, the article demonstrates that “Hard Attention” transformers can recognize only formal languages in the complexity class AC0, a class that is quite weak relative to what is needed to characterize natural language. The methods developed in this paper open up new approaches to the study of neural network models, and have already been built upon in work by former Yale Linguistics undergraduate Will Merrill. In July, Hao also presented this work at the Annual Conference of the North American Chapter of the Association for Computational Linguistics (NAACL).